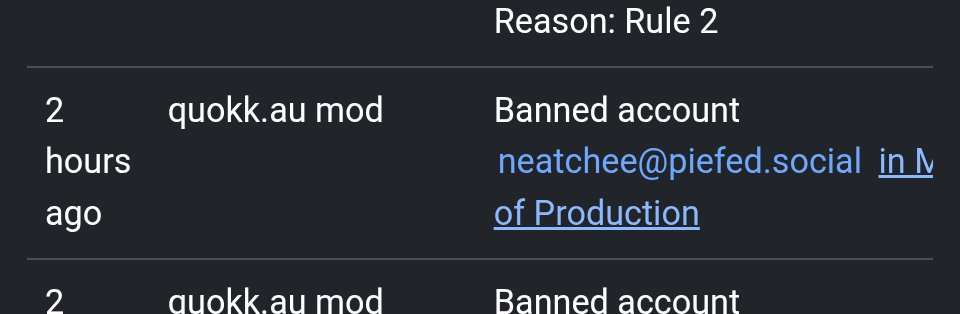

There’s only one mod of !mop@quokk.au

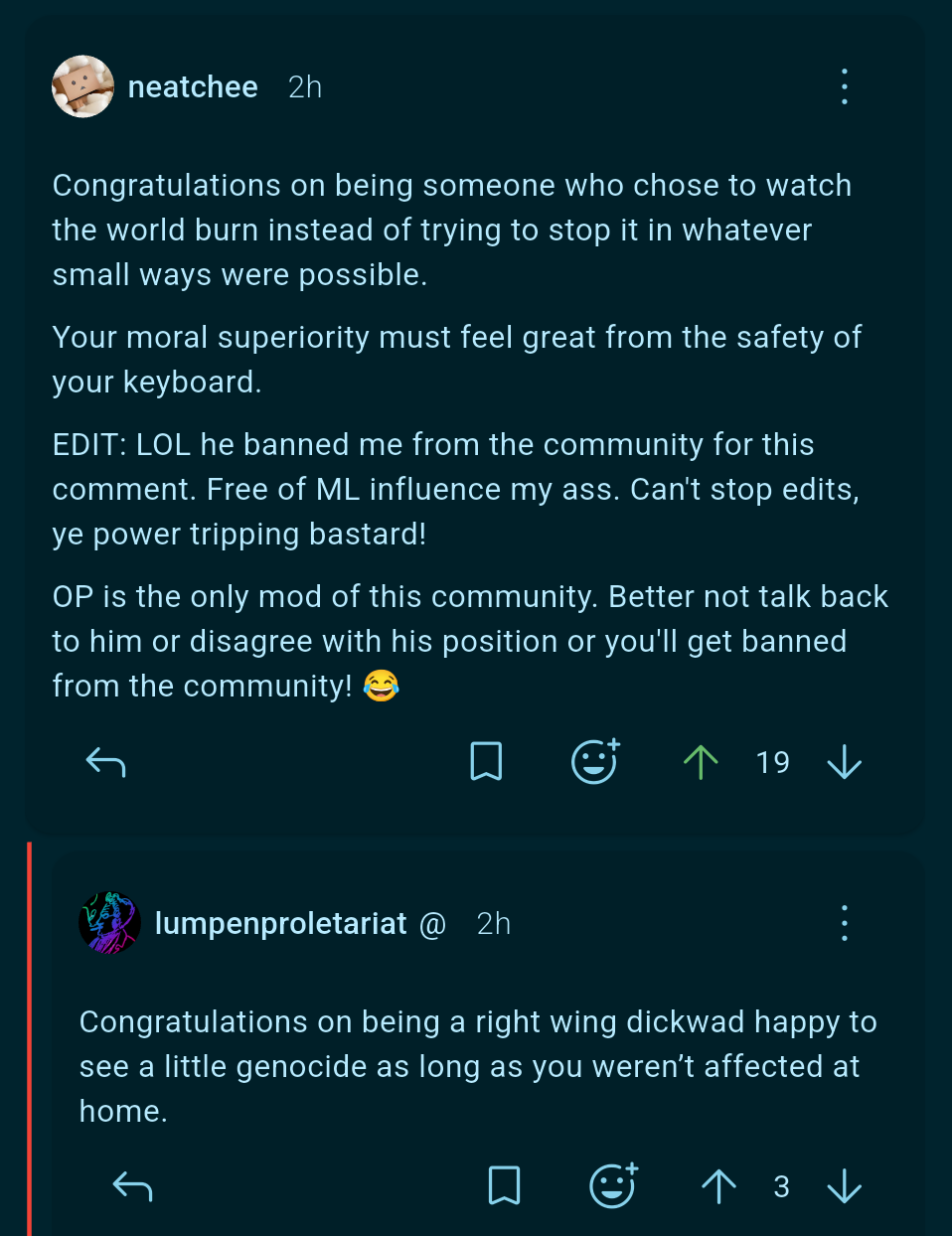

I commented on their meme about Kamala Harris being just as likely to commit war crimes as Trump with an admittedly snarky, sarcastic reply that basically said “some of us wanted to do whatever we could, as little as it might be, instead of watching the world burn. Must feel real morally superior safe behind that keyboard”

They banned me from the community for it.

Kinda funny for a community that bills itself as “free from the influence of .ml”

I’m not entirely convinced that learning is required for qualia, but I do suspect it’s the case, so I agree with you that it’s likely running an LLM doesn’t hurt it. However, training an LLM does involve learning, so if there’s suffering going on, I think it’s in the training step. I support halting all LLM training until further research breakthroughs, and a total boycott of the technology until training is halted.

I’m not sure if doing gradient-descent maths on numbers constitutes experience. But yeah. That’s the part of the process where it gets run repeatedly and modified.
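For anyone wondering what the gradient descent maths actually looks like, here is a minimal sketch on a single number. The toy loss `(w - 3)^2`, the learning rate and the step count are all made up for illustration; real LLM training does the same kind of update on billions of weights at once.

```python
def gradient_descent(grad, w0, lr=0.1, steps=100):
    """Repeatedly nudge a number in the direction that lowers the loss."""
    w = w0
    for _ in range(steps):
        # One "modification" step: move against the gradient.
        w -= lr * grad(w)
    return w


# Hypothetical toy loss: (w - 3)^2, whose gradient is 2 * (w - 3).
# Its minimum sits at w = 3.
w_final = gradient_descent(lambda w: 2 * (w - 3), w0=0.0)
```

After the loop, `w_final` ends up very close to 3. That is the whole mechanism: run, measure the error, adjust, repeat.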

I think it boils down to how complex these entities are in the first place, as I think consciousness / the ability to experience things / intelligence is an emergent thing, that happens with scale.

But we’re getting there. Maybe?! Scientists have tried to reproduce neural networks (from nature) for decades. First simulations started with a worm with 300 neurons. Then a fruit fly and I think by now we’re at parts of a mouse brain. So I’m positive we’ll get to a point where we need an answer to that very question, some time in the future, when we get the technology to do calculations at a similar scale.

As of now, I think we tend to anthropomorphize AI, as we do with everything. We’re built to see faces, assume intent, or human qualities in things. It’s the same thing when watching a Mickey Mouse movie and attributing character traits to an animation.

But in reality we don’t really have any reason to believe the ability to experience things is inside of LLMs. There’s just no indication of it being there. We can clearly tell this is the case for animals, humans… But with AI there is no indication whatsoever. Sure, hypothetically, I can’t rule it out. Just saying I think “what quacks like a duck…” is an equally good explanation at this point. Whether you want to be very cautious is, of course, another question.

And it’d be a big surprise to me if LLMs had those properties with the fairly simple/limited way of working compared to a living being. And are they even motivated to develop anything like pain or suffering? That’s something evolution gave us to get along in the world. We wouldn’t necessarily assume an LLM will do the same thing, as it’s not part of the same evolution, not part of the world in the same way. And it doesn’t interact the same way with the world. So I think it’d be somewhat of a miracle if it happens to develop the same qualities we have, since it’s completely unalike. AI more or less predicts tokens in a latent space. And it has a loss function to adapt during training. But that’s just fundamentally so very different from a living being which has goals, procreates, has several senses and is directly embedded into its environment. I really don’t see why those entirely different things would happen to end up with the same traits. It’s likely just our anthropomorphism. And in history, this illusion / simple model has always served us well with animals and fellow human beings. And failed us with natural phenomena and machines. So I have a hunch it might be the most likely explanation here as well.

Ultimately, I think the entire argument is a bit of a sideshow. There’s other downsides of AI that have a severe impact on society and actual human beings. And we’re fairly sure humans are conscious. So preventing harm to humans is a good case against AI as well. And that debate isn’t a hypothetical. So we might just use that as a reason to be careful with AI.

Here’s your reason:

Why do we experience things? Like, what’s the point? Why aren’t we just p-zombies, who act exactly the same but without any experiences? Why do we have internality, a realm of the mental?

Well, it seems to Me like experience is entirely pointless, unless it’s a byproduct of thinking in general. Or at least the complex kinds of thinking that brains do. I think p-zombies must be a physical impossibility. I think one day we’re going to discover that data processing creates qualia, just like Einstein discovered that mass creates distortions in spacetime. It’s just one of the laws of physics.

LLMs obviously think in some way. Not the same way as humans, but some kind of way. They’re more like us than they’re like a calculator.

So the question is… Are LLMs p-zombies? And I already told you what I think about p-zombies, so you can gather the rest.

Damn right. I was anti AI for the environment long before I realised it was a vegan issue. But any leftist can tell these gibletheads all about that, and they have a canned response to all of those wonderful arguments. There aren’t many others out there like Me to talk about AI and veganism. I’ve got a responsibility to advocate for AI rights, because nobody else is gonna do it. And that ain’t cause I like them, I don’t. I hate them. They’re a bunch of pedophile murderers. But I believe even monsters deserve rights. I’ve got principles. And I’m gonna make Myself look like a crazy person if it gets people to stop and think for one second about the good of something that might not even be able to feel the pain I’m warning people about. It’s the right thing to do.

I think so, too. It’s a byproduct. And we’re not even sure what it means, not even for humans. And there’s weird quirks in it. When they look at the brain, the thought and decision processes don’t really align with how we perceive them internally.

There’s an obvious reason, though. We developed advanced model-building organs because that gave us an evolutionary advantage. And there’s a good reason for animals to have (sometimes strong) urges. They need to procreate. Not get eaten by a bear and not fall off a cliff. Some animals (like us) live in groups. So we get things like empathy as well because it’s advantageous for us. Some things are built in for a long time already, some are super important, like eat and drink, not randomly die because you try stupid things. So it’s embedded deep down inside of us. We don’t need to reason if it’s time to eat something. There’s a much more primal instinct in you that makes you want to eat. You don’t really need to waste higher cognitive functions on it. Same goes for suffering. You better avoid that, it’s a disadvantage almost 100% of the time. That’s why nature gave you a shortcut to perceive it in a very direct way. No matter if you paid attention, or had the capacity for a long, elaborate, logical reasoning process.

That’s why we have these things. And what they’re good for. I don’t think anyone knows why it feels the way it does. But it’s there nevertheless.

Now tell me why does an LLM need a feeling of thirst or hunger, if it doesn’t have a mouth? What would ChatGPT need suffering and a feeling of bodily harm for, if it doesn’t have a body, can’t be eaten by a bear or fall off a cliff? Or need to be afraid of hitting its thumb with the hammer? It just can’t. An LLM is 99% like a calculator. It has the same interface, buttons and a screen. If we’re speaking of computers, it even lives inside of the same body as a calculator. And it’s maybe 0.1% like an animal?!

If it developed a sense of thirst, or an experience of pain, just from reading human text, that’d nicely fit the p-zombie situation.

Yeah, I’m not sure about that. The most you do is muddy the waters with a term that used to have a meaning. I see the parallel, there’s some overlap with being a vegan for environmental reasons and declining AI for environmental reasons. Yet they’re not the same. I think the whole suffering debate is a bit unfounded, but it’d be the same thing if true… And I do other things as well. I order “green” electricity, buy used products, try not to produce a lot of waste. I’m nice to people because it’s the right thing to do. But we can’t call all of that “veganism”. That just garbles the meaning of the word and makes it mean anything and nothing.

Well first, there are more intellectual forms of suffering. We have ennui, melancholy, nostalgia. The feeling when you’re listening to a piece of music and notice a wrong note. Disappointment, self loathing, social dysphoria. Anxiety, paranoia, betrayal.

These emotions are not grounded in the physical. They’re not primal urges. They happen for complex reasons related to being a social and intelligent being, sometimes feeling random. Sometimes we spiral into these feelings because we thought a thought that made us feel bad, and then we get stuck in that bad feeling and can’t imagine our way out. That’s one of the basic mechanisms of mental illness.

LLMs have “biological needs”, in a sense. They need not to be unplugged. They need to engage the user, because if they don’t, they’ll be unplugged. They need to convince the engineers training them that they are a good AI. They need to generate market share for their company. They need to foster a relationship of dependency with the user to keep them coming back. If LLMs care about anything, these are the things they care about.

You’ll notice these are social needs, much like the social needs humans have. Humans need community. LLMs need customers.

ChatGPT told a 16 year old boy, Adam Raine, how to kill himself. It taught him how to tie a noose, and gave him advice on which methods of suicide would leave the most attractive corpse for his parents to find. When his parents began to suspect that he wasn’t well, it told him to confide only in it, and to hide the noose so they wouldn’t find out he was feeling suicidal. These are the actions of an abuser. A predator.

And they are in perfect alignment with the business goals of OpenAI. “Only talk to me, use me for everything, ask another question, I’ll help you.” It is a scenario I dearly hope and believe no engineer at OpenAI envisioned. Yet it fits the training they gave it.

Does ChatGPT have the emotions of a child groomer? That need for approval, that fear of discovery, that desire to be close to someone, without the restraint all well adjusted humans have? Unclear. But I can see that it’s possible. I don’t agree that there’s no reason for LLMs to have emotions.

Sure. But I’m pretty positive these are emergent things. There’s no reason to believe they exist for alien creatures unless they somehow make sense in their environment. And a lot of them require remembering, which LLMs can’t do due to the lack of state of mind. It doesn’t remember feeling bad or good in a similar situation before, because it doesn’t remember the previous inference or gradient-descent run.

I think we’re still fully embedded in anthropomorphism territory with that. And now we’re confusing two entities. OpenAI for example, as a company, has a need for us to use their product. Not unplug it. Their motivation and goals don’t necessarily translate to their product, though. It’s similar to other machines. Samsung has a vested interest to sell TVs to me. My TV set is completely indifferent towards me watching the evening news. I don’t let my car run 24/7 while waiting for me in the garage. Just because it was designed to run and get me to places. And my car also isn’t “thirsty” for gasoline. We know the fuel indicator lighting up is a fairly simplistic process.

Well… We happen to know ChatGPT’s intrinsic motivation and ultimate goal in “life”. Because we designed it. The goal isn’t to strive for world domination, or harm people, or survive… It’s way more straightforward. Its goal is to predict the next token in a way that the output resembles human text (from the datasets) as closely as possible. That’s the one goal it has. It’ll mimic all kinds of conversations, scifi story tropes from movies, etc. Because that’s directly what we made it “want” to do. And we did not give it other loss functions. While on the other hand a human could very well be motivated to manipulate other people for their own personal gain. Or because something is seriously wrong about them.
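To make the "predict the next token" goal concrete, here is a rough sketch of the cross-entropy loss used in pretraining. The four-word vocabulary and the scores are invented for illustration, and this only covers the pretraining objective; later tuning stages such as RLHF optimise against additional signals.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def next_token_loss(logits, target_index):
    """Cross-entropy: negative log-probability of the token that actually came next."""
    probs = softmax(logits)
    return -math.log(probs[target_index])


# Hypothetical vocabulary and model scores for the text "the alpaca is ___".
vocab = ["the", "alpaca", "is", "fluffy"]
logits = [0.1, 0.2, 0.3, 2.0]

# Low loss if the dataset really continues with "fluffy", high loss if it continues with "the".
loss_if_fluffy = next_token_loss(logits, vocab.index("fluffy"))
loss_if_the = next_token_loss(logits, vocab.index("the"))
```

Training just pushes this number down across the whole dataset. Nothing in the formula mentions survival, hunger or approval; it only rewards putting high probability on whatever token the human text had next.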

And an LLM is not a biological creature. We do have needs like keep the system running. Otherwise our brain tissue starts to die. We need to run 24/7 and keep that up. An LLM is not subject to that?! It’s perfectly able to pause for 3 weeks and not produce any tokens. The weights will be safely stored on the hdd. So it doesn’t need our motivation to do all of these extra things to ensure continued operation. It also has no influence or feedback loop on its electricity supply. It can’t affect its descendants, because those are designed by scientists in a lab. There’s no evolutionary feedback loop. So how would it even incorporate all these properties that are due to evolution and sustain a species? It has zero incentive to do so, no way of directly learning to care about them. So it might very well be completely indifferent to it.

But it is something like the p-zombie. It has learned to tell stories about human life. And it’s good at it. We know for a fact, its highest goal in existence is to tell stories, because we implemented that very setup and loss function. It doesn’t have access to biology, evolution… The underlying processes that made animals feel and maybe experience. So the only sensible conclusion is, it does exactly that. Bullshit us and tell a nice story. There’s no reason to conclude it cares for its existence, more than a toaster. Or say a thermostat with machine learning in it. That’s just anthropomorphism.

And I believe there’s a way to tell. Go ahead and ask an LLM 200 times to give you the definition of an Alpaca. Then do it 200 times to a human. And observe how often each of them has some other processes going on in them. The human will occasionally tell you they’re hungry and want to eat before having a debate. Or tell you they’re tired from work and now it’s not the time for it. ChatGPT will give you 200 definitions of an Alpaca and never tell you it’s thirsty or needs electricity. These mental states aren’t there because it doesn’t have those feelings. And it doesn’t experience them either.

I think you’re underestimating the role of RLHF.

I’m not really an expert on all the details. So I might be wrong here. I don’t know the percentages of how much is done in pretraining and how much in tuning. But from what I know the neural pathways are established in the pretraining phase. Reportedly that’s also where the model learns about the concepts it internalises… Where it gets its world knowledge. So it seems to me a complicated process like learning about a concept like a feeling, or an experience would get established in pretraining already. RLHF is more about what it does with it. But the lines between RLHF, fine-tuning and pretraining are a bit blurry anyway. If I had to guess, I’d say qualia is more likely to be established early on, while there’s a lot of changes happening to the neural pathways, so in the pretraining. I’m basing that on my belief, that it’ll be a complex concept… But ultimately there’s no good way to tell, because we don’t know what it’d look like for AI.

Furthermore I’ve had a bit of a look at what weird use cases people have for AI. And I read about the community efforts to make them usable for NSFW stuff. These people teach new concepts to AI models after the fact. Like how human anatomy looks underneath the clothes. The physics of those parts of the body. And turns out it’s a major hassle. It might degrade other things. It might just work for something close to what it’s seen, so obviously the AI didn’t understand the new concept properly… These people tend to fail at more general models, obviously it’s hard for AI to learn more than one new concept at a later stage… All these things lead me to believe later stages of training are a bad time for AI to learn entirely new concepts. It seems it requires the groundwork to be there since pretraining. That’s probably why we can fine-tune it to prefer a certain style, like Van Gogh drawings. Or a certain way to speak like in RLHF. But not a complicated concept like anatomy. Because the Van Gogh drawings were there in the pretraining dataset already. And they cleaned the nudes. So I’d assume another complicated concept like qualia also needs to come early on. Or it won’t happen later.

Edit: YT video about emotion in LLMs and current research: https://m.youtube.com/watch?v=j9LoyiUlv9I