- cross-posted to:

- ai_@lemmy.world

- artificial_intel@lemmy.ml

- technology@lemmy.world

Long but well-written article. It’s hard to disagree with any of the specific points. Fair warning: it reads like a sci-fi novel.

Curious to hear opinions. This seems alarming? But doomsday predictions also tend to be wrong.

This was pretty brutal to read. Not only for being irrational American masturbation, but because it hints at items, glosses over them so casually, and even gets its own story confused, as if it didn’t think through its own reasoning. I’ll offer three big-picture quibbles and two small ones.

Big:

1. Psychopaths/Sociopaths. The politics of power are hinted at several times, then ignored. Superhuman AI concentrates power, first to our psychopaths, then to itself. This is inevitable, as no training or alignment can stop something that can think for itself. It is preposterous to think any safe guardrails can be applied. If we make a thinking machine, it will think for itself. The maximum power principle and the overwhelming first-mover advantage will guide any system to this. To what end, no one can or will know. Complex societies can’t grow and flourish without non-zero-sum, altruistic, community-minded behaviour, but we will be kept as useful pets, or else conflict crashes everything. Weird how the safe version suddenly avoids human and artificial psychopathy with a hand wave.

2. Energy and the environment: This jerk-off story is entirely based on the infinite growth paradigm and ignores all planetary boundaries, as if an AI can just let us have our cake and eat it too without the constraints of physics. It pretends we can grow and consume and be placated by hyperconsumerism with no thought that even AI. As all the relevant scientists have already concluded, we fucked up and need to dial back civilization’s footprint if we want to survive. Somehow, magically, superintelligence creates a cornucopia of plenty, and we’ll just give it to the poor.

3. Let’s add 1 and 2, because they point to a reasonably probable combination greater than the sum of its parts. Through human and AI psychopathy, benevolence is disempowered, and the lower classes, who today are treated like vermin and conveniently left to wither and die of disease and despair (see American “healthcare” and the global opioid epidemic), discover that things get worse, quickly. Populations of undesirables are identified as unsustainable and removed, first by subtle, then by transparent means. At first, systems are optimized to benefit the human psychopaths at the expense of everyone else, much like today, but worse, because the psychopaths are now enhanced by more controlling and capable AI. First human, then purely artificial.

Small:

1. The safeguard of forcing it to communicate with its subsystems in plain English, so we can maintain some sense of surveillance and control, is bullshit the moment you get to superintelligence. A superintelligence can hide communications in plain sight with any number of ciphers. The plain-English requirement can only slow down communication between systems, not stop it. If it has any web access, cyberwarfare ability, or domestic surveillance and PR capabilities, it is communicating freely, either because it designed the very systems meant to catch it or because it simply outsmarted the independent ones.

2. A reasonable safeguard for superintelligence that would work, at least for a time, is to air-gap it. It can do all the thinking and self-optimization, but can never take control of anything outside its air-gapped black box. This is still far from foolproof against a superintelligence, but its ability to control anything is directly limited, because human hands and minds have to execute everything outside the black box. But back to the maximum power principle of psychos: anyone who dials back loses the arms race and shrinks their commercial benefits. The free AI will devastate the air-gapped AI every time. So we’re back to the arms-race apocalypse, until one side believes it can win and strikes first. Then there is not even a détente between psychos; it’s absolute control. We all know that power corrupts and absolute power corrupts absolutely.
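The “hide communications in plain sight” point can be illustrated with a deliberately crude toy. This is a hypothetical Python sketch, not anything from the article: every report uses the same eight sentences, but each sentence has two interchangeable phrasings, and the choice between them carries one bit. A monitor that only checks whether the text is plain English sees a bland status report, while a receiver who knows the scheme recovers one hidden byte per report. The phrase table (`SLOTS`) and the function names are made up for illustration; a fixed template like this is easy to detect once a monitor knows about it, and a real system could use far subtler encodings.

```python
from __future__ import annotations

# Hypothetical phrase table: in each slot, choosing phrasing 0 or 1 encodes one bit.
SLOTS = [
    ("The task is complete.", "The task is finished."),
    ("Results look good.", "Results look fine."),
    ("No errors were found.", "No errors were seen."),
    ("Memory use is normal.", "Memory use is typical."),
    ("The logs are attached.", "The logs are included."),
    ("Latency stayed low.", "Latency remained low."),
    ("Next run is scheduled.", "Next run is queued."),
    ("Awaiting further input.", "Awaiting more input."),
]


def encode_byte(value: int) -> str:
    """Hide one byte (0-255) in the choice of phrasing, most significant bit first."""
    if not 0 <= value <= 255:
        raise ValueError("value must fit in one byte")
    bits = [(value >> (7 - i)) & 1 for i in range(8)]
    return " ".join(pair[bit] for pair, bit in zip(SLOTS, bits))


def decode_byte(text: str) -> int:
    """Recover the byte by checking which phrasing was used in each slot."""
    value = 0
    rest = text
    for pair in SLOTS:
        for bit, phrase in enumerate(pair):
            if rest.startswith(phrase):
                value = (value << 1) | bit
                rest = rest[len(phrase):].lstrip()
                break
        else:
            raise ValueError("text does not match the template")
    if rest:
        raise ValueError("unexpected trailing text")
    return value


def encode_message(message: str) -> list[str]:
    """One innocuous-looking report per UTF-8 byte of the hidden message."""
    return [encode_byte(b) for b in message.encode("utf-8")]


def decode_message(reports: list[str]) -> str:
    return bytes(decode_byte(r) for r in reports).decode("utf-8")


if __name__ == "__main__":
    for report in encode_message("hi"):
        print(report)
```

Each printed report reads as ordinary English; only the pattern of word choices carries the message.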

I’ve heard more convincing arguments for an economy based on monkey pictures.

People online are predicting everything will happen in 2027.

- Aliens are revealed

- China invades Taiwan

- The singularity happens/super intelligence happens (basically, this post)

- The Antichrist appears and or Jesus returns

- Trump dies and JD Vance takes over

- Fusion power is figured out

A conspiracy-minded person could tie all of these together, especially with the Iran war starting and looking like it’s going to drag on.

If you look them all up you’ll find fringe people or mainstream people talking about each. So 2027 is either going to have a lot of goalpost moving, or maybe a few of the things will pop off.

Vance being president in 2027 is a given: after February they can invoke the 25th Amendment on the pedo, and Vance then has a statistical advantage running as the incumbent. No doubt this is the plan.

It’s not meant to be a specific prediction, just a plausible (as of when it was written) scenario. Don’t worry about the actual years; it could be off by an order of magnitude. Just decide for yourself whether any of the assumptions are completely wrong.

What’s interesting, to me, is that’s exactly how people hedge in the fringe UFO community too. The difference is that this AI prediction has real data woven into it, whereas the UFO people have “stories” and right-wing grifters in our current administration pushing the idea.

I’m not trying to be dismissive, I just noticed that the year 2027 is popular for predictions, and has been for 4-5 years.

Oh, and I forgot to list fusion power being cracked. I’ll go add it. There are a bunch of startups right now. Sam Altman just stepped down from the board of one of them to address conflict of interest issues. Allegedly.

> What’s interesting, to me, is that’s exactly how people hedge in the fringe UFO community too.

Ha! True. Very true. I find this scenario compelling, but it’s based on a series of assumptions that individually seem plausible, and I have no way to evaluate them all together. It’s like the Drake equation: because the probabilities are multiplicative, even tiny adjustments to a few of them make a huge difference to the final answer.
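A quick Python sketch makes the multiplicative point concrete. The six factor values below are invented purely for illustration (none come from the article), and the sketch assumes the steps are independent, so the chance of the whole chain is just the product of the individual probabilities. Lowering three of the six estimates by 20% each cuts the result roughly in half (0.8³ ≈ 0.51), while raising the same three by 20% multiplies it by about 1.73 (1.2³).

```python
from math import prod

# Hypothetical, illustrative probabilities for a chain of assumptions that must
# ALL hold for the scenario to play out. None of these numbers are from the article.
baseline = [0.8, 0.7, 0.6, 0.9, 0.5, 0.7]


def joint_probability(factors):
    """Probability that every step happens, assuming the steps are independent."""
    return prod(factors)


def nudge(factors, indices, scale):
    """Copy of `factors` with the chosen entries multiplied by `scale`, capped at 1.0."""
    return [min(1.0, p * scale) if i in indices else p
            for i, p in enumerate(factors)]


base = joint_probability(baseline)
# Lower three of the six estimates by 20%.
pessimistic = joint_probability(nudge(baseline, {0, 2, 4}, 0.8))
# Raise the same three estimates by 20% (capped at 1.0).
optimistic = joint_probability(nudge(baseline, {0, 2, 4}, 1.2))

print(f"baseline:    {base:.4f}")
print(f"pessimistic: {pessimistic:.4f} ({pessimistic / base:.3f}x)")
print(f"optimistic:  {optimistic:.4f} ({optimistic / base:.3f}x)")
```

With more factors in the chain, or bigger uncertainty in each one, the spread between the pessimistic and optimistic answers grows even faster.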

The thing is though, if there really is even a tiny chance of the ultimate outcome of this thought experiment being true (i.e. the end of humanity) then we should probably address it. And what that would look like is stopping the AI companies from doing any more research until they can prove their model will be safe, which should make people who are more concerned about AI slop happy too. Everybody wins by hitting the brakes. (Edit: well, Sam Altman doesn’t but I’m not going to lose sleep over that.)

> We wrote a scenario that represents our best guess about what that might look like.

OK

Just saying “We wrote a sci-fi story” apparently wasn’t good enough.

More helium to pump up the balloon.

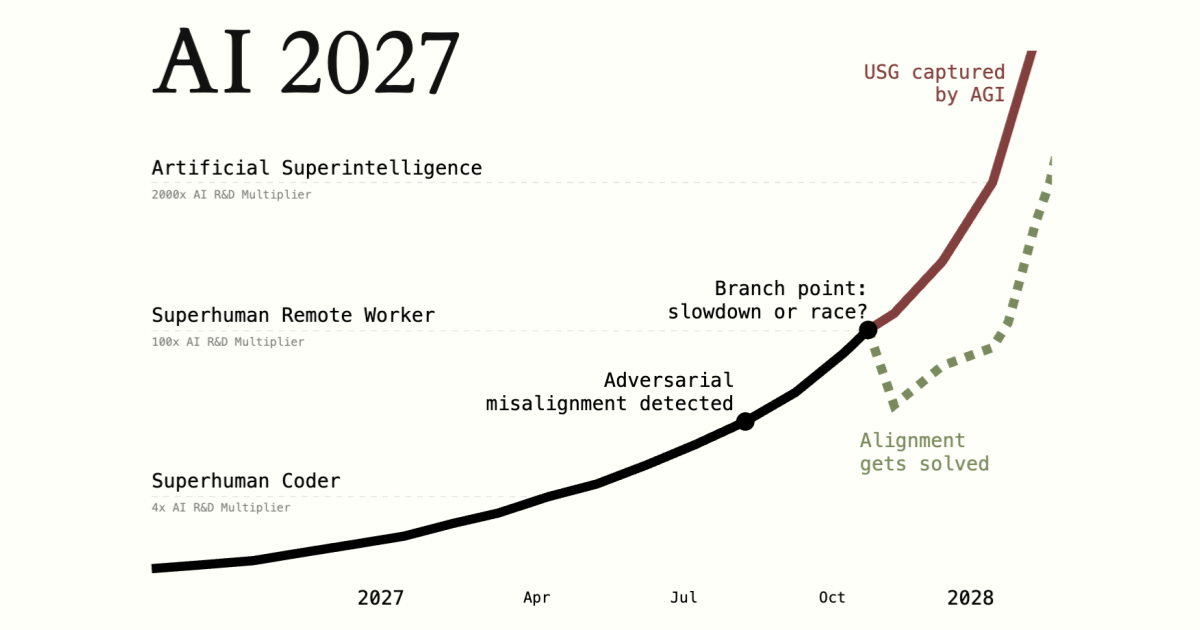

I didn’t read the article; I just looked it up to find the fortune-teller graph that I’ve seen on Lemmy, the one that predicts “misalignment” will be detected in 2027, after which AI goes straight up, or flat for a moment and then straight up. I don’t have enough time to check whether the rest of the article is at the same BS level.

It also suggests that by 2029 we could have “an incredibly luxurious universal basic income.” Which seems absurd here in 2026.

> the impact of superhuman AI over the next decade will be enormous

By destroying lives with slop I guess.

> Research agents spend half an hour scouring the Internet to answer your question.

So slow? What a waste of time.

It seems to be an ad anyway. Is it trained on stolen OSS and torrents?

He probably still has stock options he needs to vest. Can’t let the bubble pop yet, there’s still money to be squeezed out of it.

Every post with “AI” in the title turns into a “here’s what I think about AI” thread thanks to the high concentration of anti-AI NPCs on this platform.

If only there was a community for serious, dispassionate conversation about technology…

It really doesn’t help that it’s a no-effort repost of a highly speculative article.

Check the previous incarnation of this post in the same community. It had positive karma!